
Welcome to the XMPP Newsletter, great to have you here again! This issue covers the month of May 2026.

The XMPP Newsletter is brought to you by the XSF Communication Team.

Just like any other product or project by the XSF, the Newsletter is the result of the voluntary work of its members and contributors. If you are happy with the services and software you may be using, please consider saying thanks or helping these projects!

Interested in contributing to the XSF Communication Team? Read more at the bottom.

XSF Announcements

XMPP Summit 29

The XMPP Standards Foundation (XSF) is excited to announce the 29th XMPP Summit, the first XMPP Summit to take place fully online! The XMPP Summit will be held from Friday 4th September to Saturday 5th September 2026, on both days between 13:00 and 16:00 UTC. The XSF invites everyone interested in the development of XMPP technologies to attend and discuss all things XMPP remotely!

XMPP Events

- XMPP Sprint in Berlin (DE / EN): will take place from Friday 19th to Sunday 21st June 2026, at the Wikimedia Deutschland e.V. offices in Berlin, Germany. If this sounds like the right event for you, come and join us! Just make sure to list yourself here, so we know how many people will attend and can plan accordingly. If you have any questions or concerns, join us at the chatroom: sprints@muc.xmpp.org!

- XMPP at FOSSY 2026: This year’s edition of FOSSY, the fourth Free and Open Source Software Yearly conference, will take place from Thursday 6th to Sunday 9th August 2026 at the University of British Columbia, Vancouver, Canada. As always, there will be an XMPP Track. The call for proposals is closed as of June 2nd, 2026. Once again this year, JMP is pleased to offer funding to potential speakers who would like to give a talk on the XMPP track.

Videos and Talks

- Independent Messaging (XMPP) unter iOS (Monal), by eversten.net for XMPP Tutorials DE. [DE] ([EN],[ES] subtitles available).

- Independent Messaging (XMPP) unter Android (Snikket), by eversten.net for XMPP Tutorials DE. [DE] ([EN],[ES] subtitles available).

- Independent Messaging (XMPP) unter Android (Conversations), by eversten.net for XMPP Tutorials DE. [DE] ([EN],[ES] subtitles available).

- Conversations (XMPP): Feature-Preview für Version 2.20.0, by eversten.net for XMPP Tutorials DE. [DE]

XMPP Articles

- EnvsBot XMPP Helper Bot for the envs pubnix - BETA, a modular XMPP bot built with Python 3 and slixmpp, developed with the envs pubnix environment in mind but not limited to it, by ~dan for ~dan’s website on envs.net blog.

- Pra esse passaporte vai ter fila, by isadora for the isaCloud diario-de-bordo. [PT_BR]

- First 2026 Goal Reached! What’s Next?, by Timothée Jaussoin for the Movim Blog.

- What’s your oldest Openfire deployment?, by guus for the Ignite Realtime community.

- ¿Qué es XMPP: mensajería federada sin depender de WhatsApp?, by elenamusk for the Tuiter.Rocks blog. [ES]

XMPP Software News

XMPP Clients and Applications

- aTalk has released versions 5.4.0, 5.5.0 and 6.0.0 of its encrypted instant messaging with video call and GPS features for Android. These versions bring a whole lot of ‘under the hood’ changes. Please refer to the release notes for all the details.

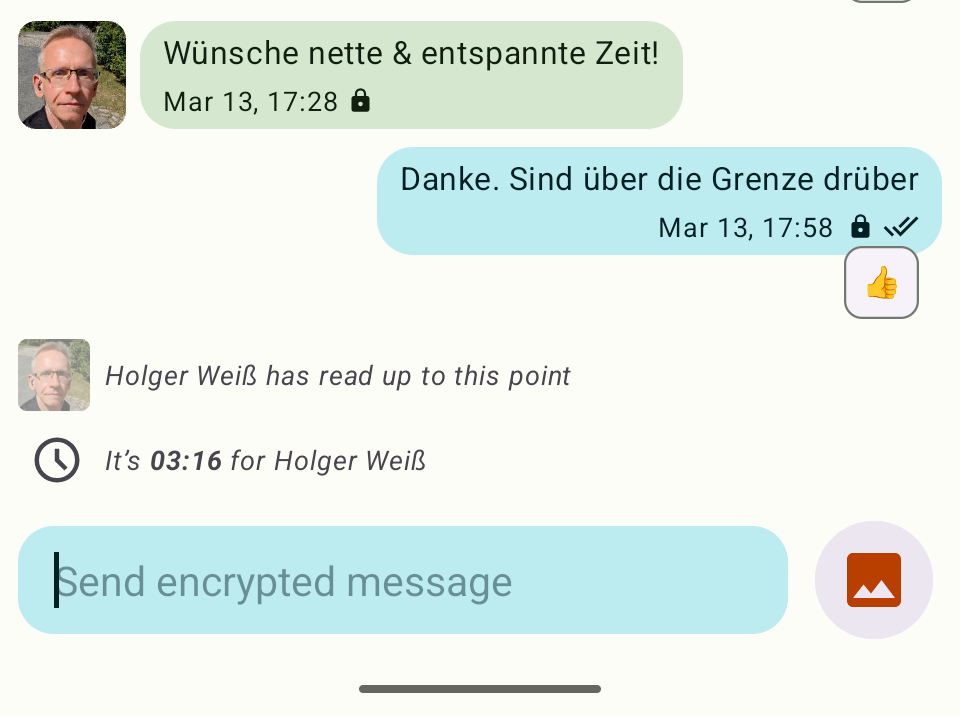

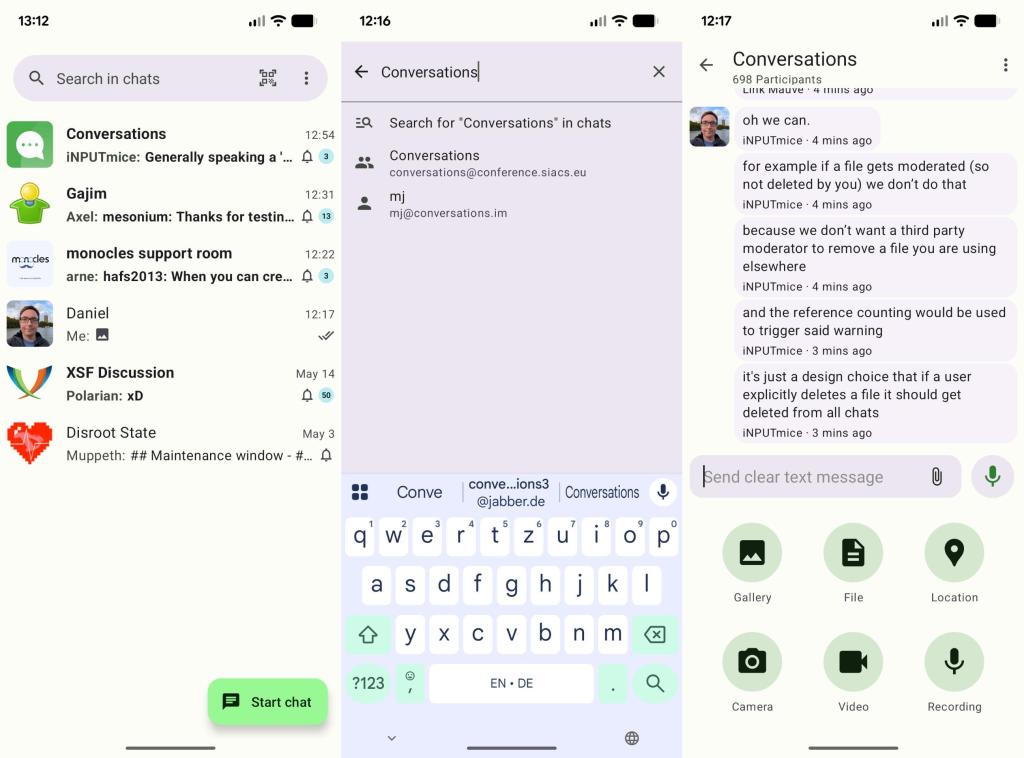

- Conversations has released versions 2.19.16 and 2.20.0 for Android. These releases bring some significant UI work, an improved media browser with the ability to share and delete media files, media stored internally by default (with a setting to automatically save to the gallery), audio recordings sent immediately instead of being attached (plus a setting to restore the old behavior), ‘Show QR code’ that now includes a preauth parameter on servers with easy invites, and a search bar for easier access to searches from the home screen. Make sure to take a look at the changelog for all the details!

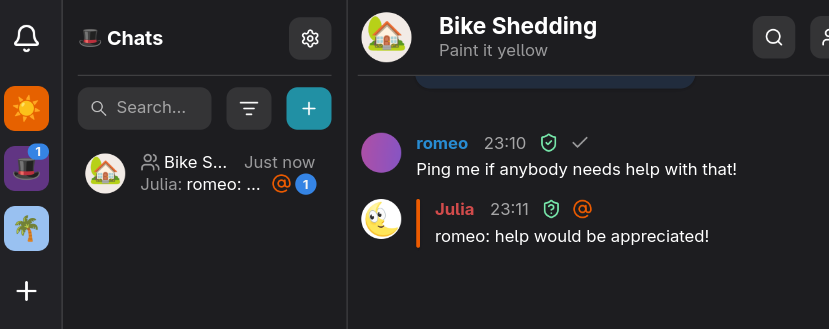

Conversations: A prominent search bar on the main screen and a reworked media attachment flow.

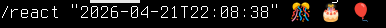

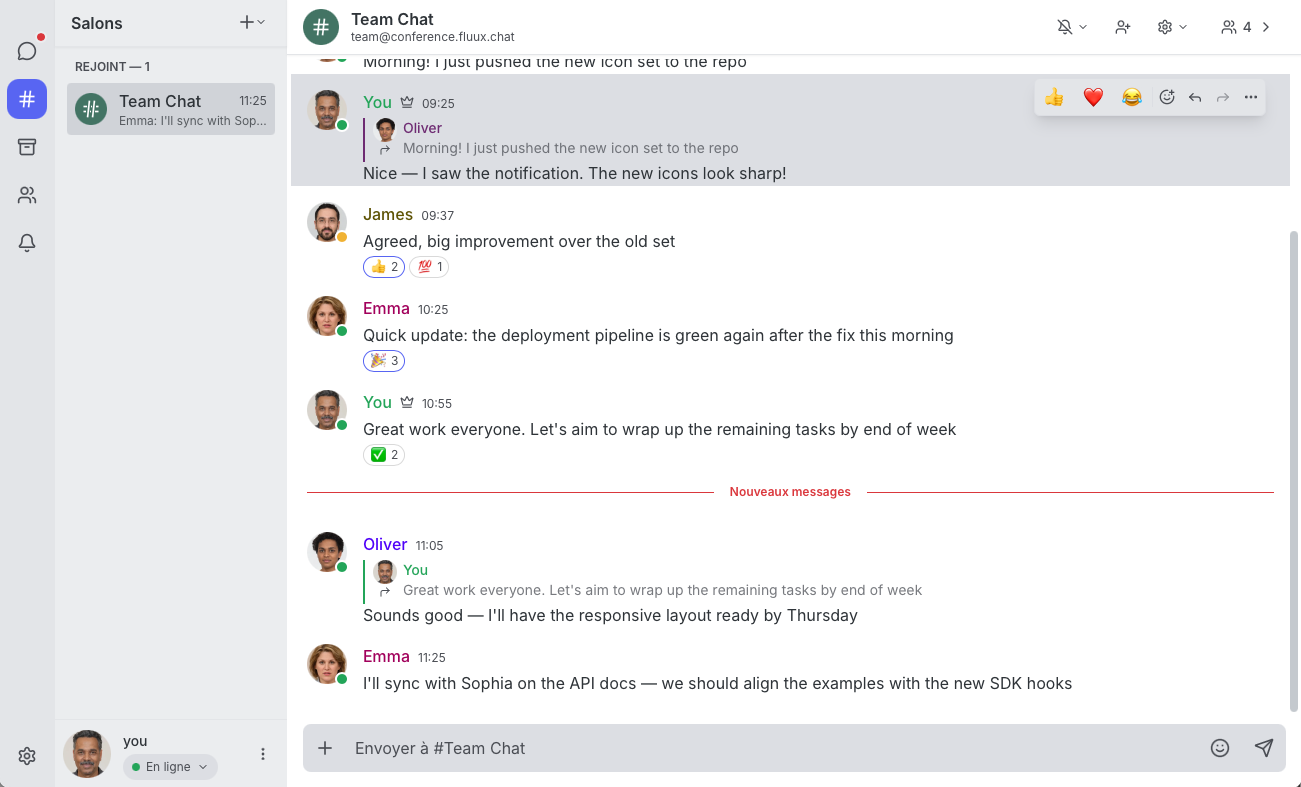

- Converse.js has released versions 13.0.0 and 13.0.1 of its open-source web-based XMPP chat client, with various additions, improvements and bugfixes. These versions add support for message reactions (allowing users to react to messages with emojis), replies to specific messages, autocomplete of possible XMPP providers when registering a new account, half a dozen fixes, and quite some work under the hood! Head over to the changelog for all the details!

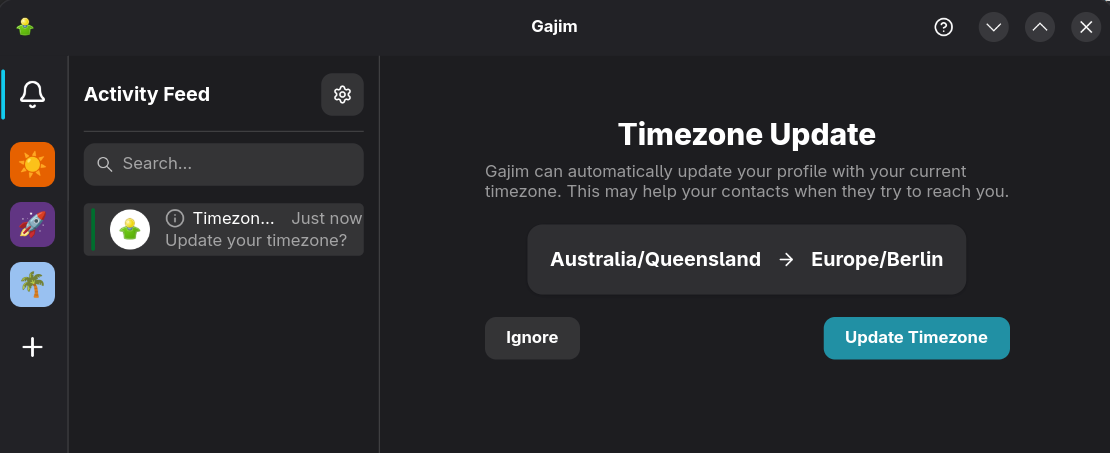

- Monal has released version 6.4.21 for iOS and macOS. This version now shows the last usage timestamp of OMEMO keys, improves location sharing accuracy and contact last activity timestamps, and also fixes several bugs!

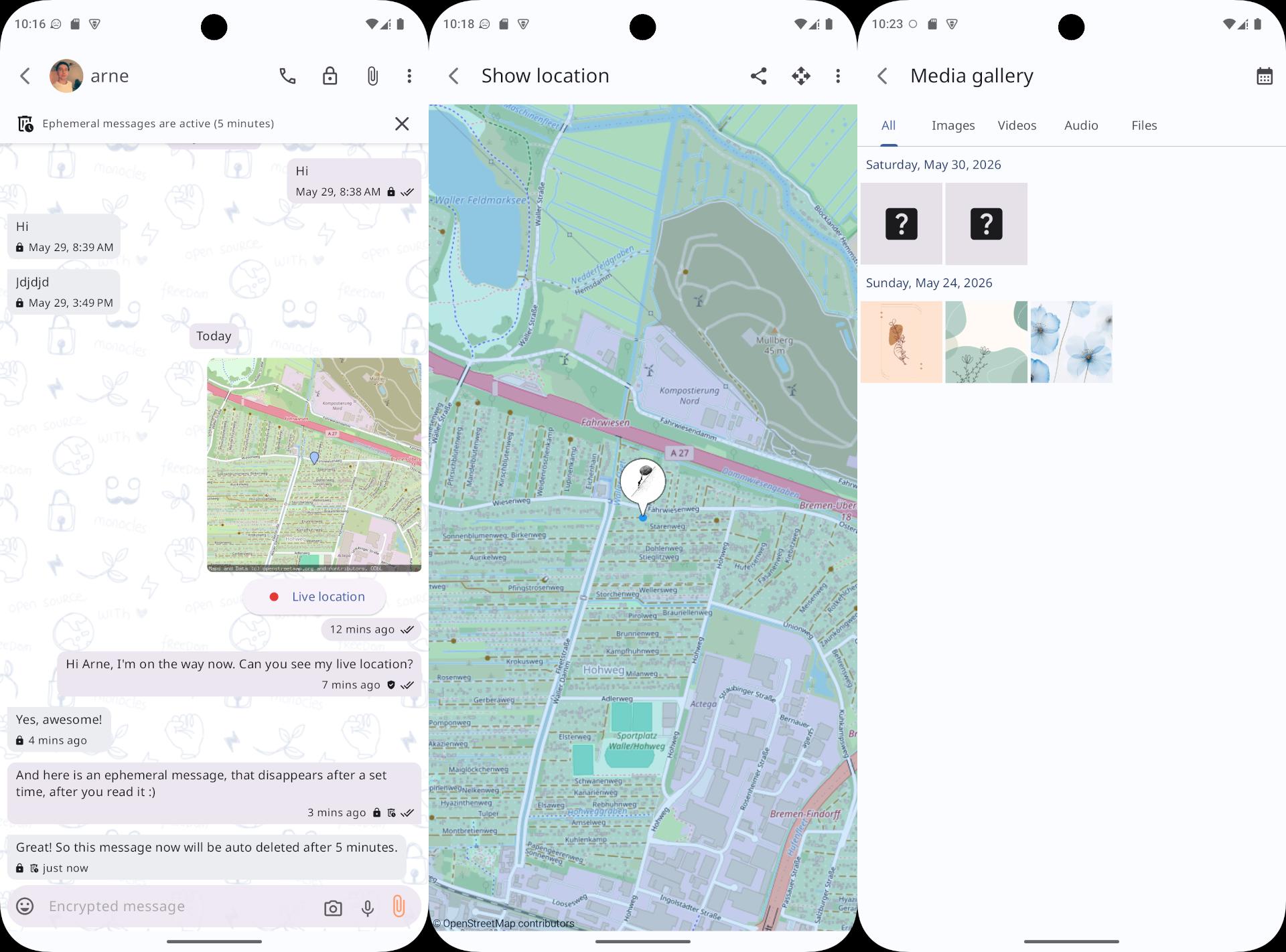

- Monocles has released versions 2.1.6, 2.2, 2.2.1, and 2.2.2 of its chat client for Android. These versions introduce far more new features, implementations, and bug fixes than we could list here. Among the more prominent highlights you’ll find ephemeral messages, live location sharing, improved backup creation and restoration, internal hidden storage used by default, local database encryption, improved backup encryption & reliability, secure deletion & migration recovery, UI/UX improvements, a media gallery, and a lot more! Make sure to check the links for all the details! Note: due to some delays with the Play Store updates, these releases are only available through F-Droid for the time being.

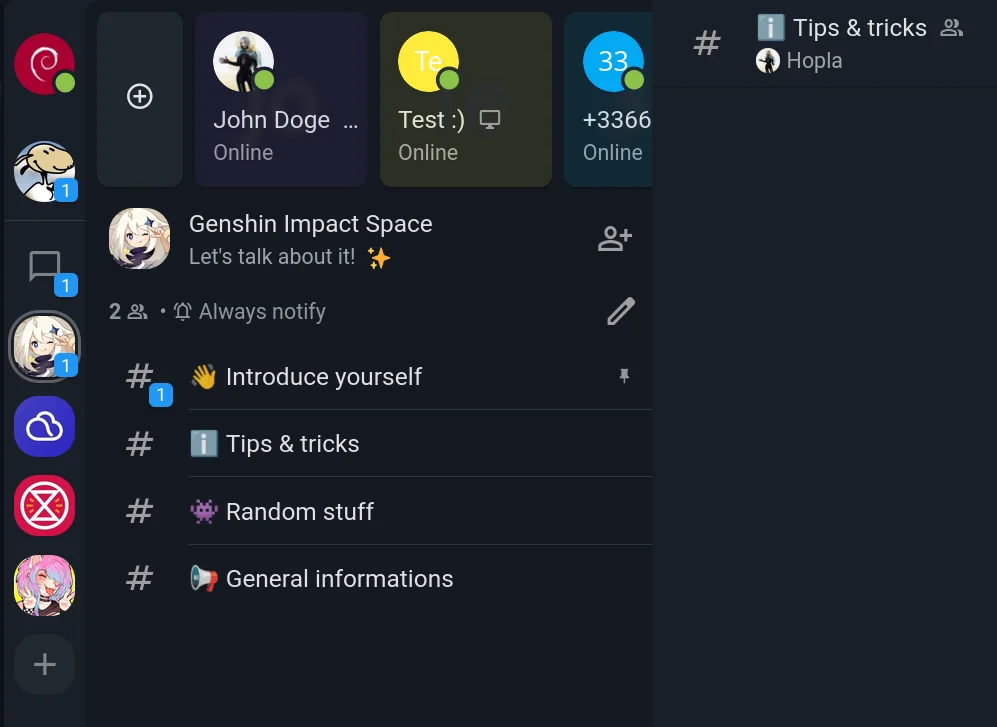

Monocles Chat: Ephemeral messages, live location sharing, and the media gallery!

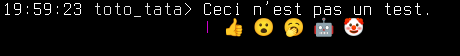

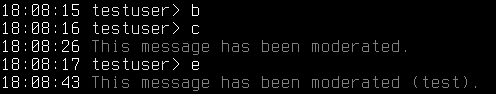

- Poezio has released version 0.18 of its console client for XMPP. This new release has mostly internal improvements, with some new features like message retraction at the sending side, various improvements to the react plugin, notably a way to convert :emoji: shortcodes to real emojis, and a new way of showing or hiding groupchat presences along with a few other improvements and fixes. You can find all the details in the link to the release.

- Profanity has released version 0.18.1 of its console-based XMPP client. This release brings bug fixes for connecting with an empty resource, resolves issues with the async editor, resolves DB migration failures, and restores message receipt requests when capabilities are unknown, among many other things. Please make sure to read the changelog for all the details!
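For readers curious about what converting :emoji: shortcodes involves, as mentioned for Poezio's react plugin above, here is a minimal Python sketch of the general idea. It is not Poezio's code: the `SHORTCODES` table, the regular expression, and the `expand_shortcodes` function are hypothetical names chosen for illustration, and a real client would likely rely on a much larger mapping.

```python
import re

# Hypothetical, deliberately tiny shortcode table (an assumption for this
# sketch); a real client would ship a far larger mapping.
SHORTCODES = {
    "thumbsup": "\U0001F44D",
    "heart": "\u2764\ufe0f",
    "tada": "\U0001F389",
}

# Matches :name: where name consists of letters, digits, "_", "+" or "-".
SHORTCODE_RE = re.compile(r":([A-Za-z0-9_+\-]+):")


def expand_shortcodes(text: str) -> str:
    """Replace known :shortcode: tokens with emojis; leave unknown ones as-is."""

    def substitute(match):
        # Fall back to the original text when the name is not in the table,
        # so things like timestamps ("10:30:45") are left untouched.
        return SHORTCODES.get(match.group(1), match.group(0))

    return SHORTCODE_RE.sub(substitute, text)


if __name__ == "__main__":
    print(expand_shortcodes("Nice release :tada: :thumbsup:"))
    print(expand_shortcodes("10:30:45 stays untouched, as does :unknown:"))
```

Falling back to the matched text for unknown names keeps the conversion safe to run on arbitrary message bodies.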

XMPP Servers

- MongooseIM has released MongooseIM 6.7.0 with more additions, changes and fixes than what we can reasonably list in here! Make sure to read the changelog for all the details!

- The Ignite Realtime community is happy to announce the release of Openfire 5.0.5. The full changelog has more details with the highlights being bug fixes and bundled library updates whilst they continue to work on an upcoming 5.1.0 feature release.

- Prosody IM is pleased to announce version 13.0.6, a new minor release from the stable branch. This release fixes a handful of bugs which were discovered and fixed since the 13.0.5 release. Most of these are minor, but a few of them are important fixes. Make sure to read the changelog for all the details!

XMPP Libraries & Tools

- go-xmpp versions 0.3.3 and 0.3.4 have been released.

- go-sendxmpp, a tool to send messages to an XMPP contact or MUC inspired by sendxmpp, versions 0.15.6 and 0.15.8 have been released. Full details in the changelog.

- jabber.el, the XMPP client for Emacs, versions 0.10.7, 0.10.8, 0.10.9 and 0.10.10 have been released. Full details in the changelog.

- libomemo.js, a TypeScript implementation of the OMEMO Multi-End Message and Object Encryption protocol for XMPP, version 0.0.1 has been released. You can read the full changelog for all the details. Note: This version still targets XMPP OMEMO version 0.3.0. Support for the latest version of OMEMO will be added in a subsequent release.

- mellium.im has released version 0.23.0 of xmpp, an XMPP library written in Go. You can check out the changelog for all the details.

- picomemo, a compact and portable implementation of the cryptography required for XMPP’s OMEMO (E2EE), version 1.2.0 has been released. Read the details in the changelog.

- slidgnal, a feature-rich Signal to XMPP puppeteering gateway, based on slidge and signalmeow, version 0.3.0beta11 has been released. You can read the intermediate changelog from 0.3.0beta10 to 0.3.0beta11 for all the details.

- slixmpp, the MIT-licensed XMPP library for Python 3.7+, version 1.15.0 has been released. You can read the official release announcement for all the details.

- xmpp-ap-bridge, a lightweight Fediverse to XMPP implementation based on client bots to enable chat-like conversations between any Fediverse application and any XMPP client from your usual client applications, versions 0.8.0 and 0.8.1 have been released. You can read about all the details on the releases page.

Extensions and specifications

The XMPP Standards Foundation develops extensions to XMPP in its XEP series in addition to XMPP RFCs. Developers and other standards experts from around the world collaborate on these extensions, developing new specifications for emerging practices, and refining existing ways of doing things. Proposed by anybody, the particularly successful ones end up as Final or Active - depending on their type - while others are carefully archived as Deferred. This life cycle is described in XEP-0001, which contains the formal and canonical definitions for the types, states, and processes. Read more about the standards process. Communication around Standards and Extensions happens in the Standards Mailing List (online archive).

Proposed

The XEP development process starts by writing up an idea and submitting it to the XMPP Editor. Within two weeks, the Council decides whether to accept this proposal as an Experimental XEP.

- TLS Channel-Binding Downgrade Protection

- This specification provides a way to secure the SASL and SASL2 SCRAM handshakes against channel-binding downgrades through TLS version downgrades.

New

- No new XEPs this month.

Deferred

If an Experimental XEP is not updated for more than twelve months, it will be moved from Experimental to Deferred. If there is another update, the XEP will be moved back to Experimental.

- No XEPs deferred this month.
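The inactivity rule described in this section can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function names are hypothetical, "twelve months" is interpreted as the same calendar day one year later, and the authoritative definition of the process remains XEP-0001.

```python
from datetime import date


def one_year_after(day: date) -> date:
    """Return the same calendar day twelve months later (Feb 29 maps to Feb 28)."""
    try:
        return day.replace(year=day.year + 1)
    except ValueError:
        # Only reachable for Feb 29 when the following year is not a leap year.
        return day.replace(year=day.year + 1, day=28)


def xep_state(last_update: date, today: date) -> str:
    """Apply the inactivity rule to an XEP that is Experimental or Deferred.

    Assumption of this sketch: a new update resets the clock, so the state
    depends only on the date of the most recent update.
    """
    if today > one_year_after(last_update):
        return "Deferred"
    return "Experimental"


if __name__ == "__main__":
    print(xep_state(date(2025, 5, 1), date(2026, 5, 1)))
    print(xep_state(date(2025, 5, 1), date(2026, 5, 2)))
```

Because the state is derived from the last update alone, the "moved back to Experimental" case needs no extra logic in this sketch: recording a newer update date is enough.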

Updated

- Version 0.2.0 of XEP-0449 (Stickers)

- Make pack attribute explicitly a MAY. Add more details about sending/receiving stickers without a sticker pack. (lmw)

- Version 0.2.0 of XEP-0463 (MUC Affiliations Versioning)

- Use a dedicated child element of <x/> rather than namespaced attributes. (lmw)

- Version 0.3.0 of XEP-0503 (Server-side spaces)

- Fix pubsub node field name in room disco info.

- Specify how to attach images (avatar and banner) to a space. (nc)

Last Call

Last calls are issued once everyone seems satisfied with the current XEP status. After the Council decides whether the XEP seems ready, the XMPP Editor issues a Last Call for comments. The feedback gathered during the Last Call can help improve the XEP before returning it to the Council for advancement to Stable.

- No Last Call this month.

Stable

- No stable XEPs this month.

Deprecated

- No XEPs deprecated this month.

Rejected

- No XEPs rejected this month.

Spread the news

Please share the news on other networks:

- Mastodon

- Movim

- Bluesky

- YouTube

- Lemmy instance (unofficial)

- Reddit (unofficial)

- XMPP Facebook page (unofficial)

Subscribe to the monthly XMPP newsletter

Also check out our RSS Feed!

Looking for job offers or want to hire a professional consultant for your XMPP project? Visit our XMPP job board.

Newsletter Contributors & Translations

This is a community effort, and we would like to thank translators for their contributions. Volunteers and more languages are welcome! Translations of the XMPP Newsletter will be released here (with some delay):

- Contributors:

- To this issue: emus, cal0pteryx, Gonzalo Raúl Nemmi, Ludovic Bocquet, XSF iTeam

- Translations:

Help us to build the newsletter

This XMPP Newsletter is produced collaboratively by the XMPP community. Each month’s newsletter issue is drafted in this simple pad. At the end of each month, the pad’s content is merged into the XSF GitHub repository. We are always happy to welcome contributors. Do not hesitate to join the discussion in our XSF Communications Team group chat (MUC) and thereby help us sustain this as a community effort. You have a project and want to spread the news? Please consider sharing your news or events here, and promote it to a large audience.

Tasks we do on a regular basis:

- gathering news in the XMPP universe

- short summaries of news and events

- summary of the monthly communication on extensions (XEPs)

- review of the newsletter draft

- preparation of media images

- translations

- communication via media accounts

Unsubscribe from the XMPP Newsletter

For this newsletter either log in here and unsubscribe or simply send an email to newsletter-leave@xmpp.org. (If you have not previously logged in, you may need to set up an account with the appropriate email address.)

License

This newsletter is published under the CC BY-SA license.